Activity

- Capturing

- Perceiving

- Routing

- Acting

- Verifying

- Recovering

- Requesting Human

DexHoldem

A Texas Hold'em-style tabletop benchmark that couples semantic grounding, sequential state tracking, and fine-grained multi-finger manipulation on a ShadowHand-UR10e platform.

Abstract

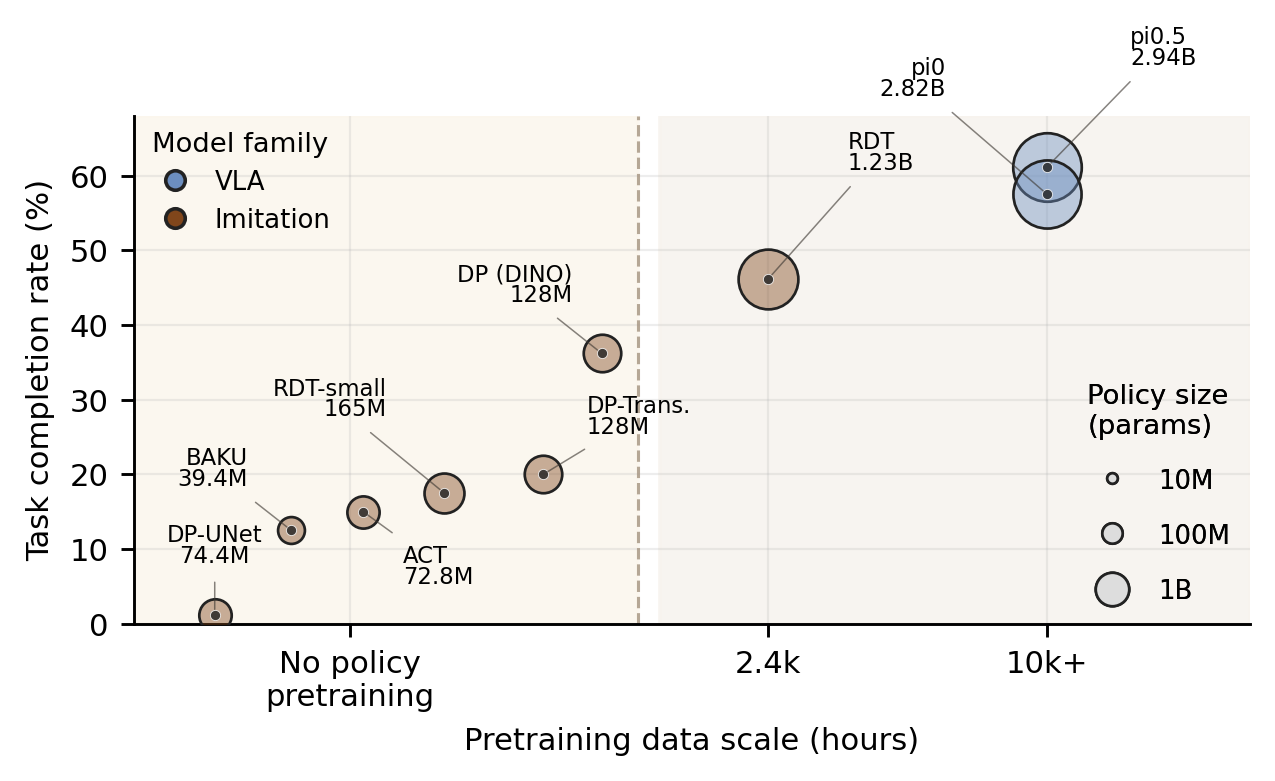

Current embodied-agent benchmarks often emphasize semantic grounding and planning while relying on simulation, coarse actions, or gripper-centric manipulation. Dexterous-manipulation benchmarks capture contact-rich control, but usually evaluate isolated motor skills without instruction-conditioned visual grounding or long-horizon state tracking.

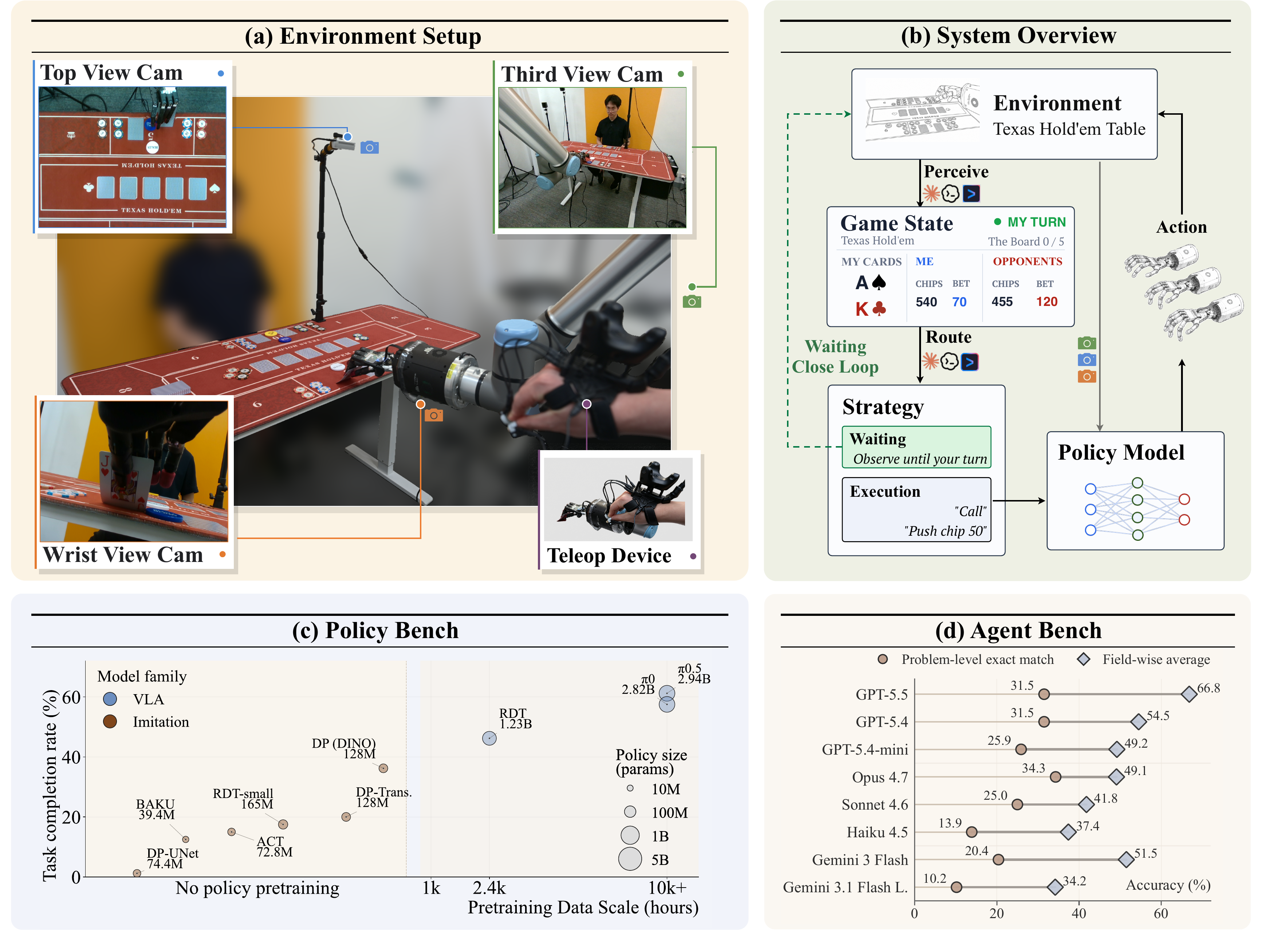

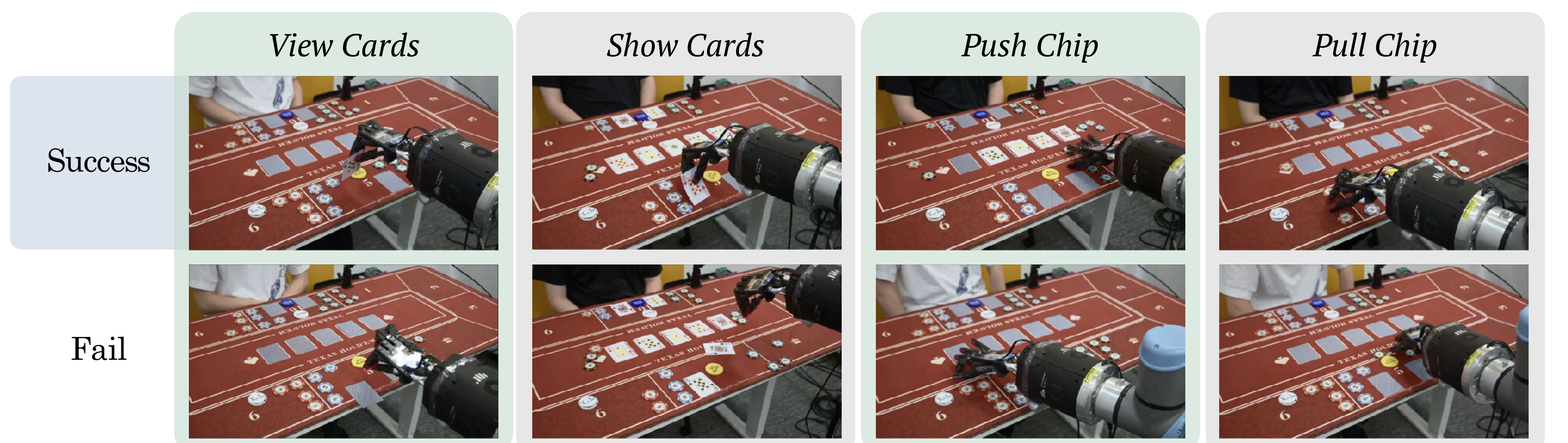

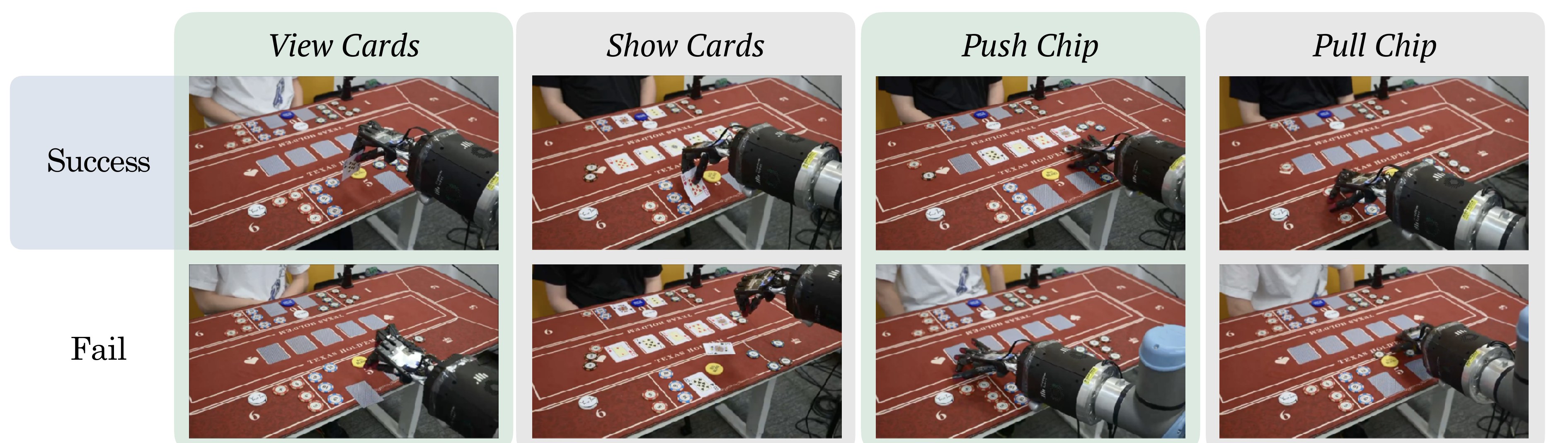

We introduce DexHoldem, a real-world ShadowHand benchmark that uses Texas Hold'em-style tabletop interaction to couple semantic grounding, sequential state tracking, and fine-grained multi-finger manipulation. The benchmark contains 1,470 teleoperated demonstrations across 14 atomic card and chip primitives, a completed physical policy evaluation, and a 36-problem perception benchmark for parsing turn state, cards, chips, bets, robot activity, recovery conditions, and outcomes.

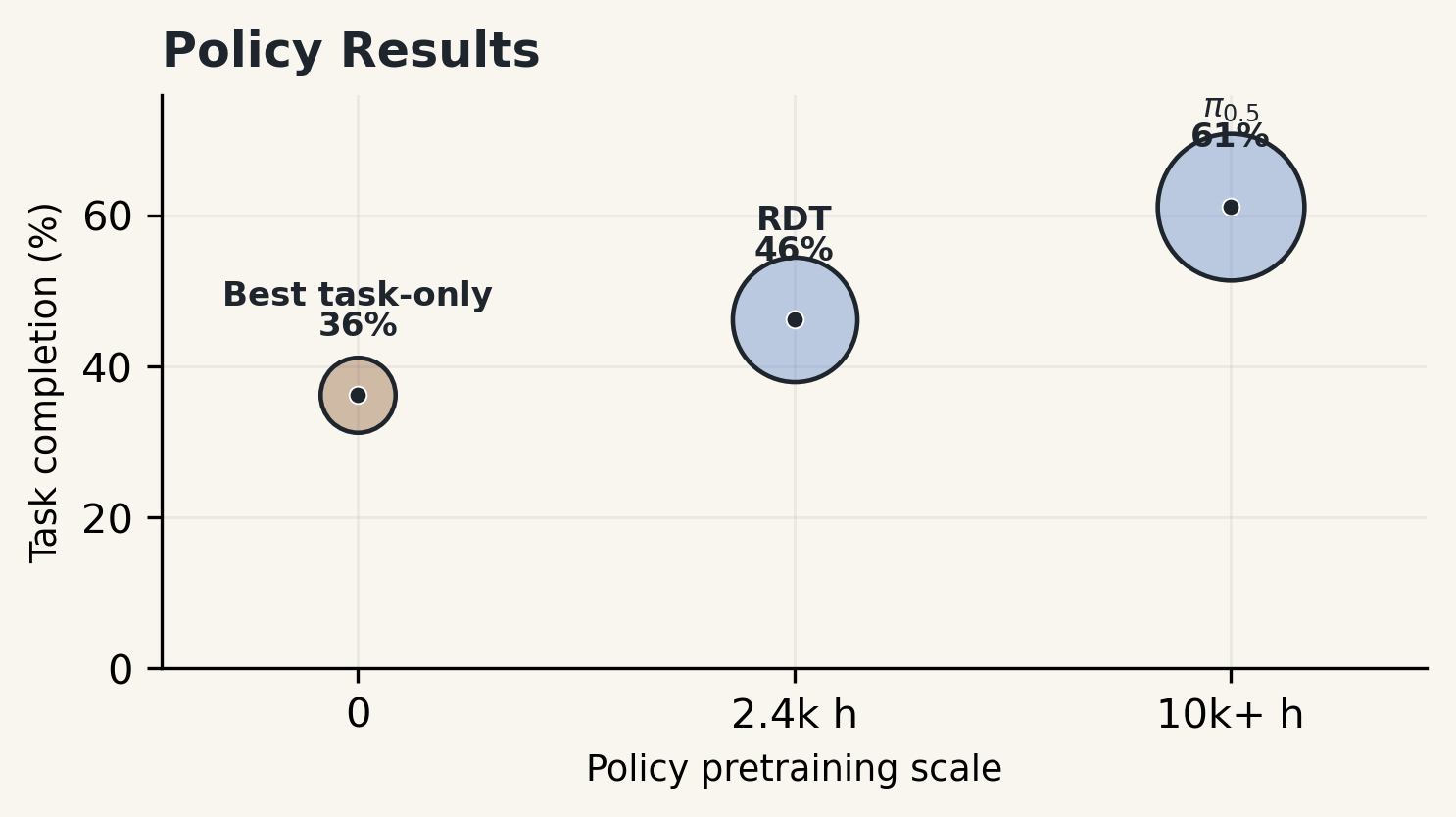

In the current benchmark, the strongest policy reaches 47.5% scene-preserving success and 61.2% task completion, while perception remains bottlenecked by complete state recovery: Opus 4.7 obtains the best strict problem-level score at 34.3%, and GPT 5.5 obtains the best field-wise average at 66.8%. Three full-loop case studies further expose how waits, recovery, human-help requests, and repeated primitive dispatches accumulate in real hand-level play.

Benchmark Scope

DexHoldem is not a benchmark of gambling strategy. It uses Hold'em-style tabletop interaction as a controlled real-world setting where semantic state, object layout, long-horizon progress tracking, and dexterous physical execution all matter.

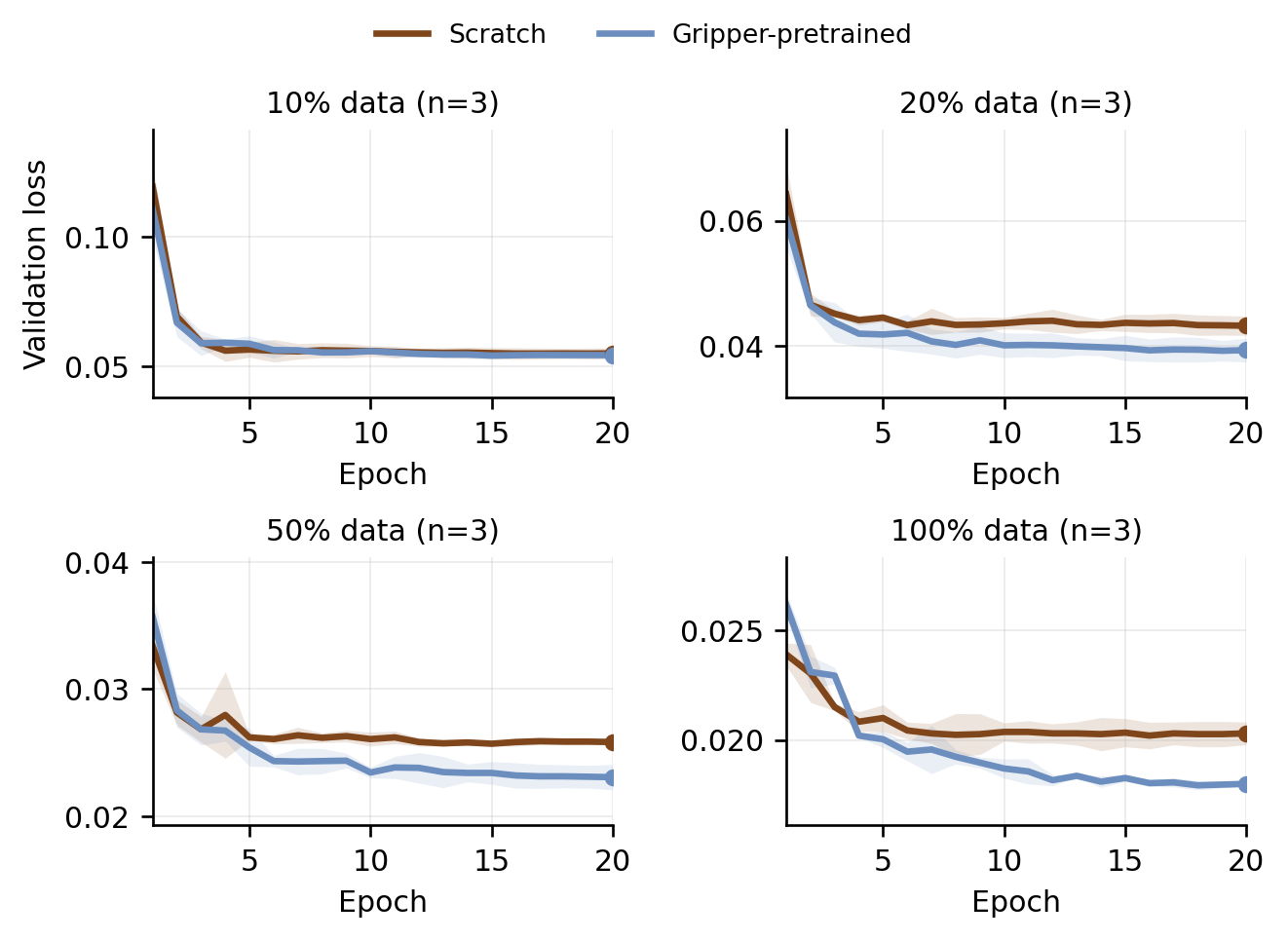

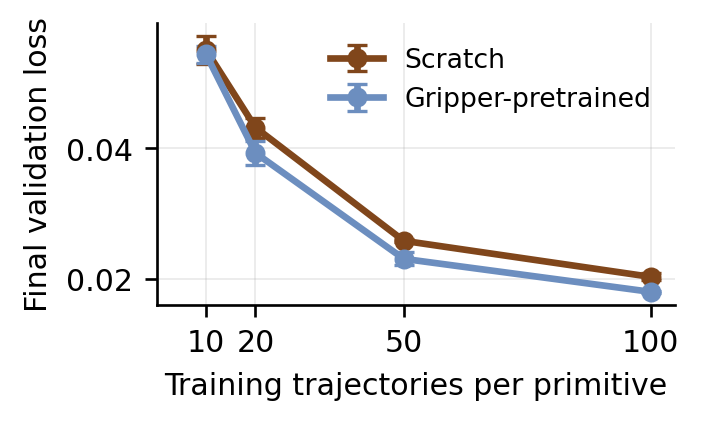

- Policy Details: 14 language-instructed atomic primitives over cards and chips, each with 105 teleoperated demonstrations, a fixed 100/5 split, and a shared 30-dimensional joint-position action space.

- Agent Evaluation: 36 real deployment states test whether a perceiver can recover structured game-state memory from one current agent-view capture and predecessor context.

- System Details: A capture-parse-route-execute-verify loop composes visual state parsing, legal high-level actions, primitive execution, recovery, and human intervention when safe continuation fails.

The policy benchmark isolates atomic dexterous execution from game-level decision making. Each policy is evaluated under the same 80-rollout physical primitive schedule and scored with a four-level rubric that separates task completion from scene preservation.

| Component | Details |
|---|---|
| Robot Platform | ShadowHand on UR10e, real-world tabletop |
| Observations | Top-down, third-person, and wrist RGB-D views, plus arm and hand proprioception |
| Policy Data | 100 train and 5 validation trajectories per primitive |
| Perception Data | 36 labeled tabletop-state problems with deterministic semantic checks |
| Evaluation | SPSR/TCR for completed policy rollouts; strict exact-match and field-wise perception accuracy; protocol specification for system rollouts |
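
The policy-data sizes quoted above can be checked with a few lines of arithmetic. This is a minimal sketch: the constants come from the benchmark description, while `split_indices` and the choice to split by demonstration index are illustrative assumptions, since the source does not say how the fixed split is drawn.

```python
NUM_PRIMITIVES = 14         # atomic card and chip primitives
DEMOS_PER_PRIMITIVE = 105   # teleoperated demonstrations per primitive
TRAIN_PER_PRIMITIVE = 100   # fixed 100/5 train/validation split
ACTION_DIM = 30             # shared joint-position action space


def split_indices(num_demos=DEMOS_PER_PRIMITIVE, num_train=TRAIN_PER_PRIMITIVE):
    """Return (train, val) demo indices for one primitive.

    Splitting by index order is an assumption made for illustration only.
    """
    indices = list(range(num_demos))
    return indices[:num_train], indices[num_train:]


train_idx, val_idx = split_indices()
total_demos = NUM_PRIMITIVES * DEMOS_PER_PRIMITIVE
print(total_demos, len(train_idx), len(val_idx))  # 1470 100 5
```

The product of 14 primitives and 105 demonstrations gives the 1,470 demonstrations reported for the benchmark.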

Embodied System

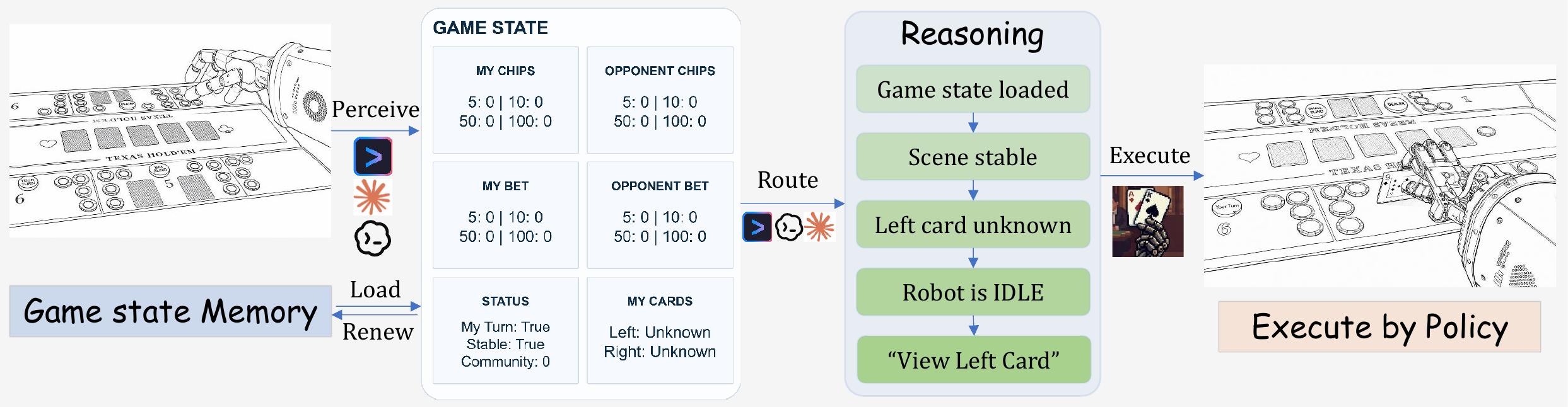

DexHoldem composes an agent-view perception loop with dexterous-policy primitives. The agent captures a tabletop image, renews structured game-state memory, routes through deterministic workflow gates, and dispatches a robot primitive only when physical motion is required.
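
The routing step described above can be sketched as a small deterministic function. Everything named here (`Route`, `GameState`, its fields, the retry limit, and the gate order) is a hypothetical illustration of "deterministic workflow gates", not the benchmark's actual implementation.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    WAIT = "wait"
    ACT = "act"
    RECOVER = "recover"
    REQUEST_HUMAN = "request_human"


@dataclass
class GameState:
    # Hypothetical subset of the structured memory; names are illustrative.
    robot_turn: bool
    needs_recovery: bool = False
    recovery_attempts: int = 0
    safe_to_continue: bool = True


def route(state, max_recovery_attempts=2):
    """Apply the gates in a fixed priority order so routing is deterministic."""
    if not state.safe_to_continue:
        return Route.REQUEST_HUMAN
    if state.needs_recovery:
        if state.recovery_attempts >= max_recovery_attempts:
            return Route.REQUEST_HUMAN
        return Route.RECOVER
    if not state.robot_turn:
        return Route.WAIT
    return Route.ACT


def step(state, dispatch_primitive):
    """Dispatch a robot primitive only when the route requires physical motion."""
    decision = route(state)
    if decision in (Route.ACT, Route.RECOVER):
        dispatch_primitive(decision)
    return decision


dispatched = []
step(GameState(robot_turn=False), dispatched.append)  # waits, no dispatch
step(GameState(robot_turn=True), dispatched.append)   # dispatches one primitive
print([d.value for d in dispatched])  # ['act']
```

Keeping the gates as plain conditionals over parsed state means the same state always yields the same route, which makes a loop trace easy to audit.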

Agentic Perception Bench

Each perception problem samples one real tabletop state from system-level deployment. The perceiver parses only the current agent-view capture, optionally uses predecessor states as context, and writes the fixed structured schema for loop stage, turn ownership, blinds, cards, chip inventories, current bets, outcome, and uncertainty.

- Turn ownership, blind context, and wait-vs-act decisions.

- Acting, atom-idle, cached sequence continuation, and activity-stage tracking.

- Retryable recovery, down states, and human intervention.

- Cards, chips, bets, and turn state for downstream routing.

- Robot-held card identity using current visual evidence and predecessor context.

- Win or lose judgment from visible cards, cached state, or opponent fold.
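
To make the fixed structured schema concrete, the sketch below shows one way such a record could be laid out and serialized. The class name, field names, types, and example values are all assumptions chosen to mirror the categories listed in the text (loop stage, turn ownership, blinds, cards, chip inventories, current bets, outcome, uncertainty); the benchmark's real schema may differ.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict, List, Optional


@dataclass
class GameStateMemory:
    # Hypothetical schema; every field name here is illustrative.
    loop_stage: str
    turn_owner: str
    blinds: Dict[str, int]
    cards: Dict[str, List[str]]
    chip_inventories: Dict[str, int]
    current_bets: Dict[str, int]
    outcome: Optional[str] = None
    uncertain_fields: List[str] = field(default_factory=list)


state = GameStateMemory(
    loop_stage="perceiving",
    turn_owner="robot",
    blinds={"small": 5, "big": 10},
    cards={"robot": ["Ah", "Kd"], "community": []},
    chip_inventories={"robot": 100, "opponent": 100},
    current_bets={"robot": 5, "opponent": 10},
    uncertain_fields=["cards"],
)

# Round-trip through JSON so the record can be stored between loop iterations.
payload = json.dumps(asdict(state), sort_keys=True)
restored = GameStateMemory(**json.loads(payload))
print(restored == state)  # True
```

A flat, typed record like this lends itself to both exact-match scoring of the whole state and per-field diagnostics.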

Results

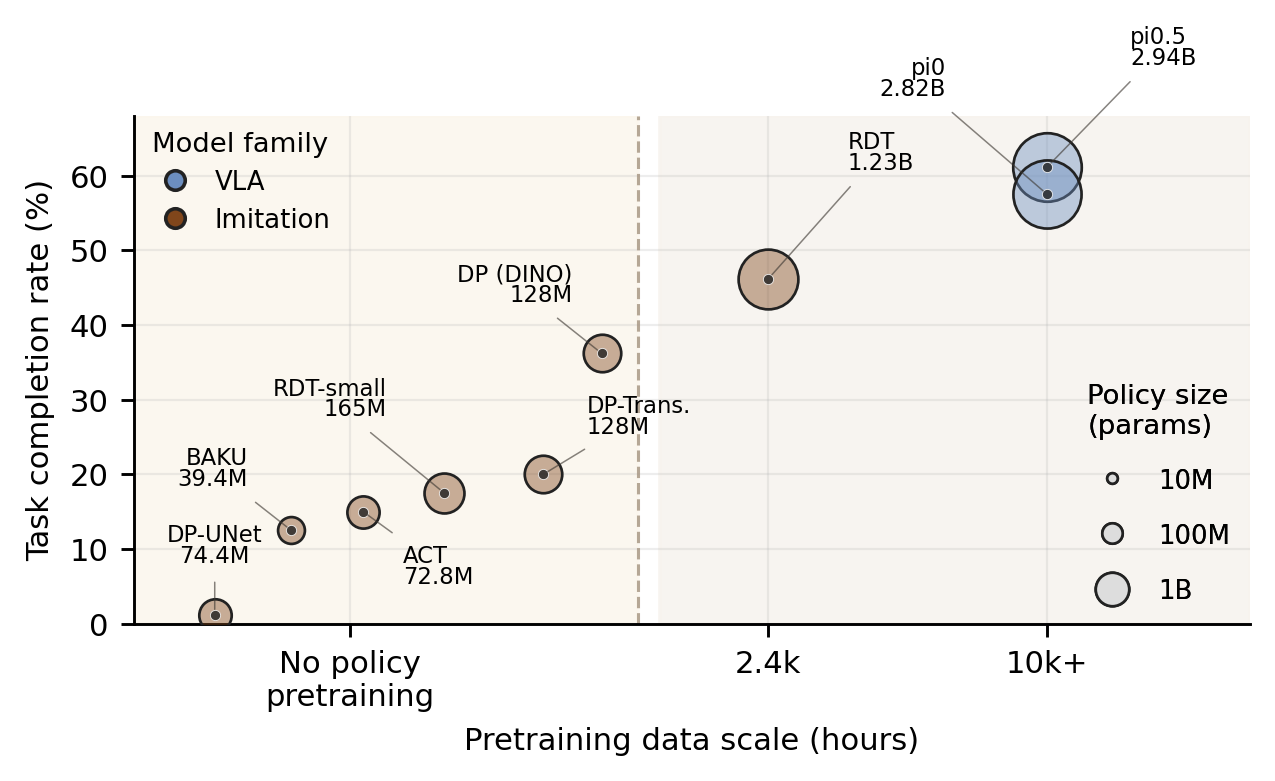

Policy results are measured over 80 real-world primitive rollouts per model. Perception results use strict problem-level exact-match over 36 tabletop states, plus field-wise diagnostic accuracies. Full-system results are reported as three case-study trajectories with operational counters rather than as a hand-level leaderboard.

| Policy | Family | SP | DC | TF | DF | SPSR | TCR |
|---|---|---|---|---|---|---|---|
| π0.5 | VLA | 38 | 11 | 31 | 0 | | |
| π0 | VLA | 38 | 8 | 33 | 1 | | |
| RDT | Robot-pretrained | 24 | 13 | 40 | 3 | | |
| DP (DINO) | Task-trained | 21 | 8 | 48 | 3 | | |
| DP-Transformer | Task-trained | 11 | 5 | 46 | 18 | | |
| RDT-small | Task-trained | 11 | 3 | 59 | 7 | | |
| ACT | Task-trained | 8 | 4 | 67 | 1 | | |
| BAKU | Task-trained | 5 | 5 | 67 | 3 | | |
| DP-UNet | Task-trained | 1 | 0 | 79 | 0 | | |
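
The SPSR and TCR rates can be recomputed from the four outcome counts in the policy table. The sketch below assumes, as an inference rather than a definition stated in the source, that SPSR = SP / total and TCR = (SP + DC) / total. Under that assumption the first row gives 47.5% and 61.25%, which agrees with the 47.5% and 61.2% reported for the strongest policy. The function name `rates` is illustrative.

```python
# Outcome counts (SP, DC, TF, DF) copied from the policy table.
COUNTS = {
    "π0.5": (38, 11, 31, 0),
    "π0": (38, 8, 33, 1),
    "RDT": (24, 13, 40, 3),
    "DP (DINO)": (21, 8, 48, 3),
    "DP-Transformer": (11, 5, 46, 18),
    "RDT-small": (11, 3, 59, 7),
    "ACT": (8, 4, 67, 1),
    "BAKU": (5, 5, 67, 3),
    "DP-UNet": (1, 0, 79, 0),
}


def rates(sp, dc, tf, df):
    """Return (total rollouts, SPSR %, TCR %) under the assumed definitions."""
    total = sp + dc + tf + df
    spsr = 100.0 * sp / total         # assumed: scene-preserving successes only
    tcr = 100.0 * (sp + dc) / total   # assumed: SP and DC both count as completed
    return total, spsr, tcr


for name, row in COUNTS.items():
    total, spsr, tcr = rates(*row)
    print(f"{name:15s} n={total}  SPSR={spsr:.2f}  TCR={tcr:.2f}")
```

Every row sums to 80, matching the 80-rollout schedule per model.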

| Harness | Perceiver | Overall | LS | TO | BI | CC | CB | RCI | OCI | SO | Avg |
|---|---|---|---|---|---|---|---|---|---|---|---|
| Codex | GPT 5.5 | 31.5 | 72.2 | 80.6 | 100.0 | 61.5 | 45.8 | 62.5 | 35.4 | 76.2 | 66.8 |
| Codex | GPT 5.4 | 31.5 | 65.7 | 93.5 | 100.0 | 23.1 | 31.2 | 56.2 | 18.8 | 47.6 | 54.5 |
| Codex | GPT 5.4 mini | 25.9 | 56.5 | 94.4 | 99.1 | 33.3 | 14.6 | 29.2 | 18.8 | 47.6 | 49.2 |
| Claude Code | Opus 4.7 | 34.3 | 43.5 | 93.5 | 100.0 | 43.6 | 31.2 | 37.5 | 43.8 | 0.0 | 49.1 |
| Claude Code | Sonnet 4.6 | 25.0 | 46.3 | 88.0 | 100.0 | 23.1 | 10.4 | 29.2 | 22.9 | 14.3 | 41.8 |
| Claude Code | Haiku 4.5 | 13.9 | 47.2 | 68.5 | 91.7 | 35.9 | 12.5 | 25.0 | 18.8 | 0.0 | 37.4 |
| Gemini CLI | Gemini 3 Flash | 20.4 | 63.9 | 77.8 | 100.0 | 28.2 | 18.8 | 29.2 | 22.9 | 71.4 | 51.5 |
| Gemini CLI | Gemini 3.1 Flash L. | 10.2 | 27.8 | 73.1 | 94.4 | 28.2 | 12.5 | 22.9 | 14.6 | 0.0 | 34.2 |
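
The gap between the strict Overall column and the field-wise columns follows from how the two metrics aggregate errors: one wrong field fails the whole problem under strict scoring but costs only that field under field-wise scoring. The sketch below illustrates this on two toy problems with hypothetical field names. It also checks an inference about the table, namely that Avg looks like the unweighted mean of the eight field columns (shown here for the GPT 5.5 row).

```python
from statistics import mean


def strict_exact_match(pred, gold):
    """A problem counts only if every labeled field matches."""
    return all(pred.get(key) == value for key, value in gold.items())


def fieldwise_accuracy(preds, golds):
    """Per-field accuracy in percent over all problems."""
    n = len(golds)
    return {
        f: 100.0 * sum(p.get(f) == g[f] for p, g in zip(preds, golds)) / n
        for f in golds[0]
    }


# Toy data; field names and values are illustrative, not benchmark labels.
golds = [
    {"turn_owner": "robot", "current_bet": 20},
    {"turn_owner": "opponent", "current_bet": 40},
]
preds = [
    {"turn_owner": "robot", "current_bet": 20},
    {"turn_owner": "opponent", "current_bet": 20},  # one wrong field
]

matches = [strict_exact_match(p, g) for p, g in zip(preds, golds)]
strict = 100.0 * sum(matches) / len(matches)
per_field = fieldwise_accuracy(preds, golds)
field_avg = mean(per_field.values())
print(strict, per_field, field_avg)

# GPT 5.5 row: LS, TO, BI, CC, CB, RCI, OCI, SO from the perception table.
gpt55_fields = [72.2, 80.6, 100.0, 61.5, 45.8, 62.5, 35.4, 76.2]
gpt55_avg = mean(gpt55_fields)  # about 66.775, reported as 66.8
```

In the toy case a single bet error halves the strict score while the field-wise average stays at 75%, the same pattern that separates the best strict score from the best field-wise average in the table.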

Demos & Release

Policy-bench rollouts are released as compressed web videos with labels recovered from the original model-task-result filenames. The system-level release documents the GPT 5.5 + π0 hand traces, state folders, wait branches, recovery dispatches, and primitive counters used in the paper's case studies.

Policy Bench Rollouts

Source recordings are 4K 60fps MOV files. The hosted videos are 960x540 H.264 MP4 previews with original filenames preserved for traceability.
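
A conversion of this kind could be scripted roughly as follows. This is a sketch under assumptions: the source does not say which tool or settings produced the previews, so the use of ffmpeg, the helper name `preview_command`, the directory names, and the example filename are all hypothetical. The function only builds the command line and keeps the original file stem, so it can be inspected without ffmpeg installed.

```python
from pathlib import Path
from typing import List


def preview_command(source: Path, out_dir: Path) -> List[str]:
    """Build an ffmpeg command for a 960x540 H.264 MP4 preview.

    The output keeps the source file stem so each preview can be traced
    back to its original recording.
    """
    target = out_dir / (source.stem + ".mp4")
    return [
        "ffmpeg",
        "-i", str(source),
        "-vf", "scale=960:540",     # downscale from the 4K source
        "-c:v", "libx264",          # H.264 video encoder
        "-movflags", "+faststart",  # move metadata up front for web playback
        str(target),
    ]


# Hypothetical filename following a model-task-result pattern.
cmd = preview_command(Path("raw/pi0_pickcard_SP.MOV"), Path("previews"))
print(" ".join(cmd))
```

The command list can be passed to `subprocess.run(cmd, check=True)` once the paths point at real recordings.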

Benchmark Data

1,470 physical policy demonstrations plus 36 agent-state problems with ground-truth state, route, and action labels.

Policy Evaluation

Physical primitive trials compare π-series, RDT, diffusion-policy, ACT, and BAKU policies under SPSR and TCR.

Agent Evaluation

Agentic perception results over 36 tabletop states quantify strict state recovery and field-wise bottlenecks.

System Evaluation

Three GPT 5.5 + π0 hand-level case studies expose wait branches, recovery dispatches, human-help requests, and primitive counters.

Resources

@misc{dexholdem2026,
  title = {DexHoldem: Playing Texas Hold'em with Dexterous Embodied System},
  author = {Chen, Feng and Chu, Tianzhe and Sun, Li and Zhou, Pei and Xu, Zhuxiu and Gao, Shenghua and Zhai, Yuexiang and Yang, Yanchao and Ma, Yi},
  year = {2026},
  url = {https://dexholdem.github.io/Dexholdem/}
}

Author Contributions

Contributions are summarized for the project website. For a compact list of every author with profile links, see the dedicated author page.

Co-proposed and led the project; designed the data-collection infrastructure; maintained the hardware; trained DP, RDT, and ACT; contributed to embodied-agent and perception-benchmark design; collected data; and built the project website.

Co-proposed the project; designed the data-collection infrastructure; led the embodied-agent and perception-benchmark design; and performed teleoperation.

Co-proposed the project; designed the data-collection infrastructure; trained Octo; and performed teleoperation.

Trained the pi-series and BAKU models; deployed and evaluated policy models and embodied agents; and performed teleoperation.

Designed the simulation component; deployed and evaluated embodied agents; and collected data.

Shenghua Gao, Yuexiang Zhai, Yanchao Yang, and Yi Ma provided project guidance and feedback. Yuexiang Zhai and Yi Ma also co-proposed the project.