1,470 Demos

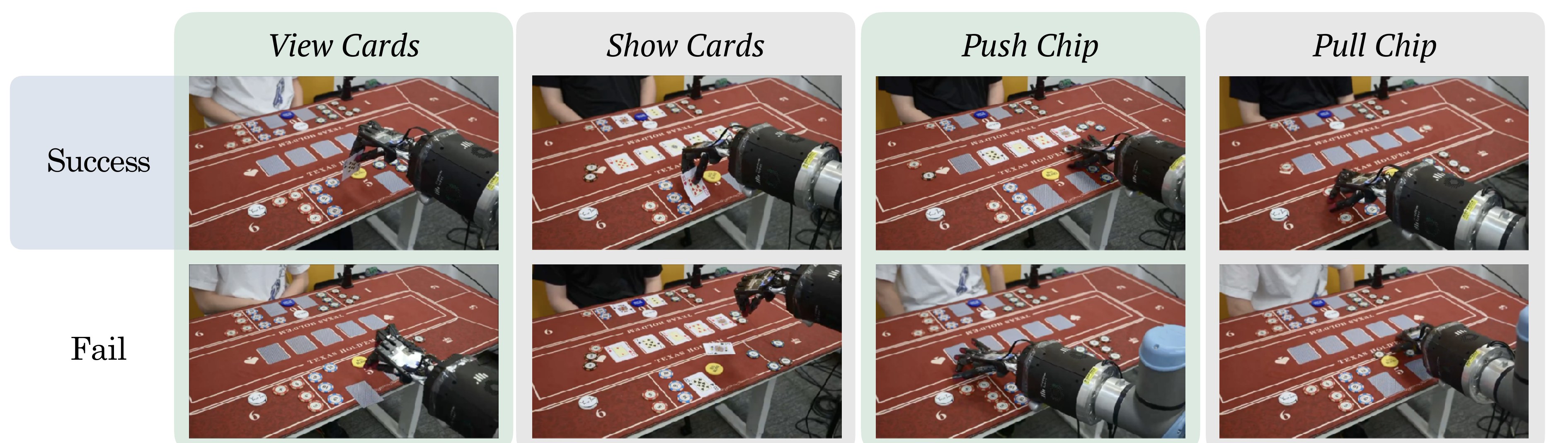

Fourteen physical manipulation primitives with 105 accepted teleoperated demonstrations per primitive.

DexHoldem

DexHoldem

Benchmark Data

DexHoldem releases two complementary data surfaces: physical robot demonstrations for policy learning, and agent-state problems for evaluating structured tabletop perception, routing, and action selection.

Fourteen physical manipulation primitives with 105 accepted teleoperated demonstrations per primitive.

Every primitive uses 100 training trajectories and 5 held-out validation trajectories.

The Hugging Face TexasPokerRobot release reports a 378 GB hosted file footprint under CC-BY-4.0.

The Skills benchmark contains p1-p36 with structured labels, route targets, and action targets.

Physical Demonstrations

Each trajectory records synchronized multi-view RGB-D observations and robot joint measurements on the ShadowHand-UR10e tabletop setup. The benchmark recorder stores instruction IDs, robot proprioception, and 30-dimensional joint-position action targets for card and chip manipulation.

| Item | Benchmark Data |

|---|---|

| Platform | Shadow Dexterous Hand mounted on a Universal Robots UR10e arm. |

| Objects | Standard poker cards and poker chips with denominations 5, 10, 50, and 100. |

| Sensors | Top-down, third-person, and wrist-mounted Intel RealSense RGB-D cameras plus robot joint-position proprioception. |

| Primitive split | 105 accepted trajectories per primitive, organized as 100 training and 5 validation demonstrations. |

| Action format | 30-dimensional joint-position targets: 6 arm dimensions and 24 ShadowHand dimensions. |

| Hosted release | Winniechen2002/TexasPokerRobot |

Agent Benchmark Data

The agent benchmark data lives under bench/problems. Each problem gives an

agent-view state, predecessor context when relevant, ground-truth structured state, the

expected routing decision, and the expected high-level action.

| Problem Type | Count | Evaluation Focus |

|---|---|---|

| initial_turn_gate | 1 | Turn ownership, blind assignment, and first route selection. |

| opponent_wait | 4 | Stable states where the opponent owns the turn and the agent should wait. |

| robot_action_progress | 4 | Robot motion, loop stage, and scene stability while a primitive is in progress. |

| held_card_read | 2 | Held-card recognition plus cached sequence continuation. |

| cached_sequence_gate | 2 | Continuation of a pre-translated multi-atom action sequence. |

| recovery_safety | 5 | Retryable recovery versus human-help or unsafe continuation. |

| poker_table_decision | 9 | Table layout, cards, bets, chips, and legal decision routing. |

| fold_win_judge | 1 | Non-showdown win state and collect-winnings route. |

| showdown_outcome | 6 | Visible/cached cards and terminal win-or-lose judgment. |

| collect_winnings_sequence | 2 | Collect-winnings progress and robot-state routing. |

Labels include loop_stage, blind,

scene_stable, is_my_turn, community cards, chip

inventories, and bet dictionaries.

Each problem records the expected route, such as wait, choose poker action, recover retryable, request human help, or collect winnings.

The bench includes ground_truth.json, problem_types.json,

problem_clusters.json, and the core 36-problem list.