14 Primitives

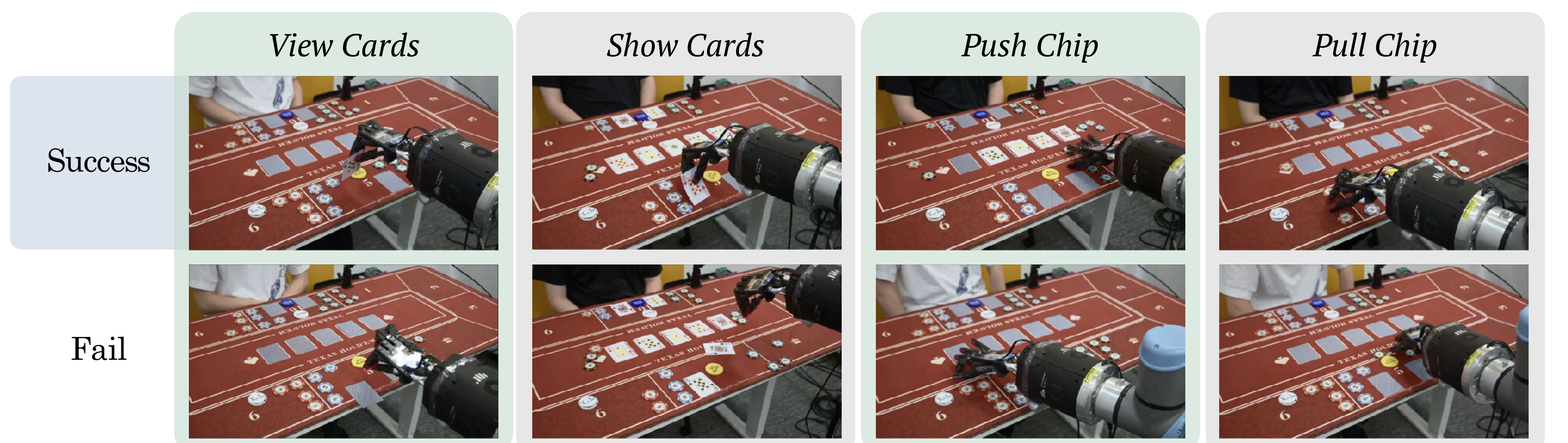

Card pickup, card placement, card reveal, chip push, and chip pull tasks are specified as language-instructed atomic skills.

DexHoldem


Dexterous Hand Policy Bench

DexHoldem isolates low-level dexterous execution from poker strategy. Every policy is trained and evaluated as a single multi-task primitive policy under the same real-robot observation, action, rollout, and scoring interface.


Each primitive has 105 accepted teleoperated demonstrations, with a fixed 100 train / 5 validation split.
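A fixed per-primitive split can be sketched as follows. The page does not say how the five validation episodes are chosen. The seeded shuffle, the `split_episodes` helper, and the integer episode IDs are therefore hypothetical. The sketch only illustrates that the split is deterministic and that train and validation sets are disjoint.

```python
import random
from typing import Iterable, List, Tuple


def split_episodes(
    episode_ids: Iterable[int], n_val: int = 5, seed: int = 0
) -> Tuple[List[int], List[int]]:
    """Hypothetical fixed split: hold out n_val episodes chosen by a seeded RNG."""
    ids = sorted(episode_ids)
    if n_val >= len(ids):
        raise ValueError("need more episodes than validation slots")
    rng = random.Random(seed)  # fixed seed -> identical split on every run
    val = sorted(rng.sample(ids, n_val))
    held_out = set(val)
    train = [e for e in ids if e not in held_out]
    return train, val


# 105 accepted demonstrations for one primitive -> 100 train / 5 validation.
train_ids, val_ids = split_episodes(range(105))
print(len(train_ids), len(val_ids))  # 100 5
```

Applying the same helper once per primitive yields the same split for every benchmarked policy.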

Policies output joint-position targets for the 6-DOF UR10e arm and 24-DOF Shadow Dexterous Hand.

Physical evaluation uses 80 primitive-level trials per policy, grouped into pickup, chip push, chip pull, and put-down/show.

Benchmark Interface

The policy bench removes game-level decision making from the low-level model. At each rollout step, the policy receives top-down, third-person, and wrist-mounted RealSense RGB-D observations, the current arm-hand joint state, and a task condition. It returns a short-horizon sequence of 30-dimensional joint-position targets in the canonical robot order.
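The request/response contract described above can be sketched as a small data structure. The field names, camera keys, and byte encoding of frames are hypothetical. The dimensions (6 arm joints, 24 hand joints, 30-D targets) and the 14 instruction IDs come from this page. Listing the arm joints before the hand joints is an assumption about the "canonical robot order".

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Sequence, Tuple

ARM_DOF = 6  # UR10e joints
HAND_DOF = 24  # Shadow Dexterous Hand joints
ACTION_DIM = ARM_DOF + HAND_DOF  # 30-D joint-position target
CAMERAS = ("top_down", "third_person", "wrist")  # hypothetical stream names


@dataclass
class PolicyRequest:
    rgb: Dict[str, bytes]  # encoded RGB frame per camera (encoding is an assumption)
    depth: Dict[str, bytes]  # encoded depth frame per camera
    joint_state: List[float]  # current arm-hand joint positions
    instruction: Optional[str] = None  # natural-language task text
    instruction_id: Optional[int] = None  # discrete primitive ID, 0..13

    def validate(self) -> None:
        for cam in CAMERAS:
            if cam not in self.rgb or cam not in self.depth:
                raise ValueError(f"missing RGB-D stream: {cam}")
        if len(self.joint_state) != ACTION_DIM:
            raise ValueError(f"expected {ACTION_DIM} joint values")
        if self.instruction is None and self.instruction_id is None:
            raise ValueError("a task condition (text or ID) is required")


def validate_chunk(chunk: Sequence[Sequence[float]]) -> None:
    """An action chunk is a non-empty sequence of 30-D joint-position targets."""
    if not chunk:
        raise ValueError("empty action chunk")
    for step in chunk:
        if len(step) != ACTION_DIM:
            raise ValueError(f"expected {ACTION_DIM}-D targets, got {len(step)}")


def split_arm_hand(target: Sequence[float]) -> Tuple[List[float], List[float]]:
    """Assumes the canonical order lists the 6 arm joints before the 24 hand joints."""
    if len(target) != ACTION_DIM:
        raise ValueError(f"expected {ACTION_DIM} values")
    return list(target[:ARM_DOF]), list(target[ARM_DOF:])
```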

Observations comprise top-down, third-person, and wrist-mounted RGB-D camera streams plus normalized joint-position proprioception.

Language-conditioned policies (pi0.5, pi0, RDT, and RDT-small) receive natural-language task text; task-specific baselines receive discrete instruction IDs.

The canonical loader exposes RGB/depth inputs, optional precomputed visual features, instruction IDs, and 30-D action targets.

A ZeroMQ policy server on port 13579 returns executable joint targets to the robot-side client.
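The robot-side loop can be sketched without committing to a transport. `query_policy`, `read_joints`, and `send_targets` are hypothetical callables. In the deployed system, `query_policy` would wrap the ZeroMQ round trip to port 13579. That round trip is omitted here so the sketch runs without pyzmq. The page does not say whether the client executes whole chunks or re-queries early, so the `steps_per_chunk` option is an assumption.

```python
from typing import Callable, List, Optional

Vector = List[float]


def run_primitive(
    query_policy: Callable[[Vector, int], List[Vector]],
    read_joints: Callable[[], Vector],
    send_targets: Callable[[Vector], None],
    instruction_id: int,
    max_steps: int,
    steps_per_chunk: Optional[int] = None,
) -> int:
    """Request a chunk, execute it (or its first steps_per_chunk targets), repeat.

    Returns the number of joint targets sent to the robot.
    """
    if steps_per_chunk is not None and steps_per_chunk < 1:
        raise ValueError("steps_per_chunk must be positive")
    sent = 0
    while sent < max_steps:
        chunk = query_policy(read_joints(), instruction_id)
        if not chunk:
            raise RuntimeError("policy server returned an empty chunk")
        for target in chunk[:steps_per_chunk]:  # [:None] keeps the whole chunk
            if sent >= max_steps:
                break
            send_targets(target)
            sent += 1
    return sent
```

With `steps_per_chunk=None`, every returned target is executed before the next query. A smaller value trades extra inference calls for faster reaction to new observations.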

Primitive Suite

Directional labels are interpreted in the robot-facing tabletop frame: push moves chips away from the robot into the forward betting region, and pull moves chips back toward the robot-side region.

| ID | Primitive | Policy instruction |
|---|---|---|
| 0 | pick_up_left | Pick up the card on the left side. |
| 1 | pick_up_right | Pick up the card on the right side. |
| 2 | push_5 | Push forward the chips worth 5. |
| 3 | push_10 | Push forward the chips worth 10. |
| 4 | push_50 | Push forward the chips worth 50. |
| 5 | push_100 | Push forward the chips worth 100. |
| 6 | pull_5 | Pull back the chips worth 5. |
| 7 | pull_10 | Pull back the chips worth 10. |
| 8 | pull_50 | Pull back the chips worth 50. |
| 9 | pull_100 | Pull back the chips worth 100. |
| 10 | put_down_left | Place the held card onto the left position. |
| 11 | put_down_right | Place the held card onto the right position. |
| 12 | show_left | Reveal the face of the left card. |
| 13 | show_right | Reveal the face of the right card. |
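The primitive table can be mirrored as a lookup structure that maps instruction IDs to names and prompts. The helper `parse_primitive` and the `CHIP_DIRECTION` sign convention are hypothetical. The signs only encode the directional rule stated above: push moves chips away from the robot, and pull moves them back toward it.

```python
from typing import Dict, Tuple, Union

# Primitive IDs, names, and instructions copied from the table above.
PRIMITIVES: Dict[int, Tuple[str, str]] = {
    0: ("pick_up_left", "Pick up the card on the left side."),
    1: ("pick_up_right", "Pick up the card on the right side."),
    2: ("push_5", "Push forward the chips worth 5."),
    3: ("push_10", "Push forward the chips worth 10."),
    4: ("push_50", "Push forward the chips worth 50."),
    5: ("push_100", "Push forward the chips worth 100."),
    6: ("pull_5", "Pull back the chips worth 5."),
    7: ("pull_10", "Pull back the chips worth 10."),
    8: ("pull_50", "Pull back the chips worth 50."),
    9: ("pull_100", "Pull back the chips worth 100."),
    10: ("put_down_left", "Place the held card onto the left position."),
    11: ("put_down_right", "Place the held card onto the right position."),
    12: ("show_left", "Reveal the face of the left card."),
    13: ("show_right", "Reveal the face of the right card."),
}
NAME_TO_ID = {name: pid for pid, (name, _) in PRIMITIVES.items()}

# Hypothetical sign along the robot-facing forward axis of the tabletop frame:
# push moves chips away from the robot (+1), pull moves them toward it (-1).
CHIP_DIRECTION = {"push": +1, "pull": -1}

SKILLS = ("pick_up", "put_down", "push", "pull", "show")


def parse_primitive(name: str) -> Tuple[str, Union[str, int]]:
    """Split a primitive name into (skill, argument): a side or a chip value."""
    for skill in SKILLS:
        prefix = skill + "_"
        if name.startswith(prefix):
            arg = name[len(prefix):]
            return skill, int(arg) if skill in CHIP_DIRECTION else arg
    raise ValueError(f"unknown primitive: {name}")
```

Task-specific baselines would consume the integer key. Language-conditioned policies would consume the instruction string stored with it.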

Policy Families

The reported benchmark focuses on the policy families and variants included in the main physical comparison. Experimental adapters that are not part of that comparison are kept separate from the benchmarked-policy summary.

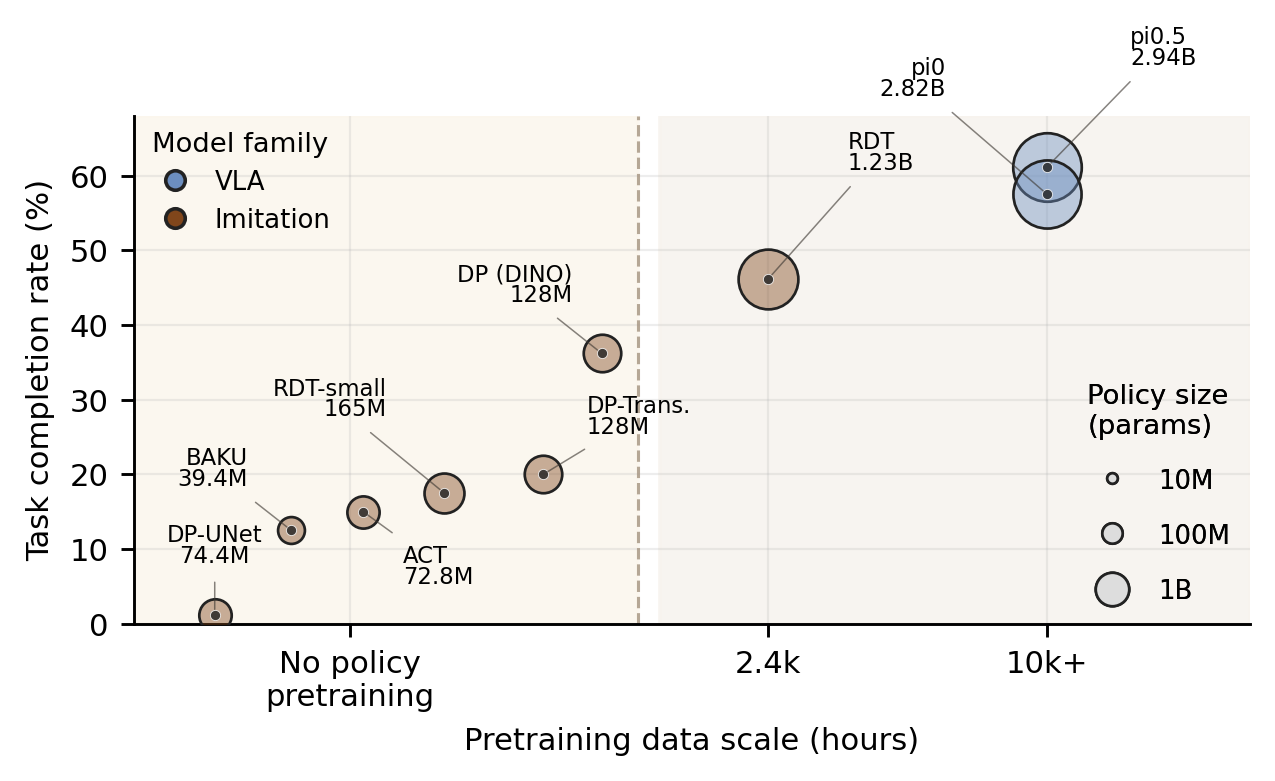

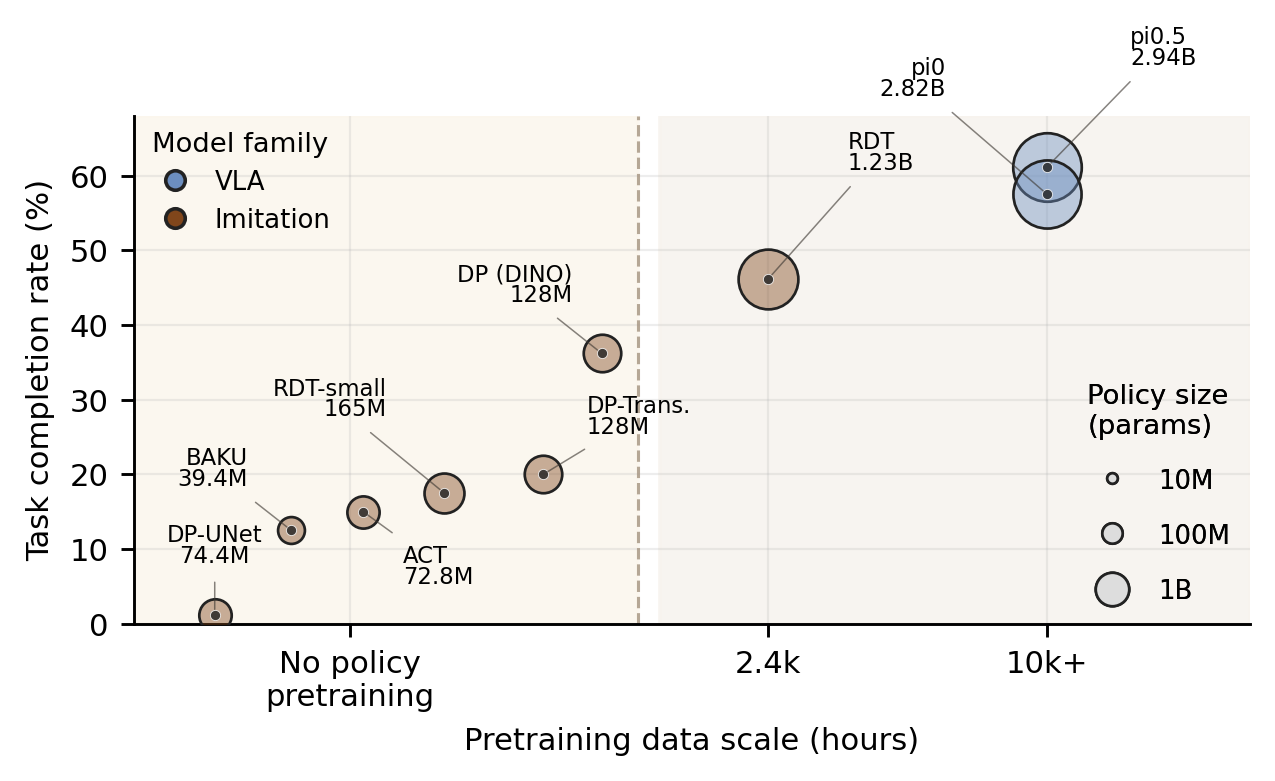

pi0.5, pi0, RDT, and RDT-small are adapted to the ShadowHand-UR interface and conditioned on natural-language task text.

DP (DINO), DP-Transformer, DP-UNet, ACT, and BAKU are trained on the DexHoldem demonstrations and share the same action space, primitive schedule, and scoring rubric.

Every trial receives one of four outcome labels (SP, DC, TF, or DF). Each model is then reported by its scene-preserving success rate (SPSR) and task completion rate (TCR).
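The two rates are consistent with the definitions below, inferred from the reported counts. SP, DC, TF, and DF count scene-preserving successes, disruptive completions, task failures, and disruptive failures, defined under Physical Scoring. For example, pi0.5 scores $38/80 = 47.5\%$ SPSR and $(38 + 11)/80 = 61.25\%$ TCR, reported as 61.2%.

$$
N = \mathrm{SP} + \mathrm{DC} + \mathrm{TF} + \mathrm{DF}, \qquad
\mathrm{SPSR} = \frac{\mathrm{SP}}{N}, \qquad
\mathrm{TCR} = \frac{\mathrm{SP} + \mathrm{DC}}{N}
$$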

| Policy | Conditioning | Implementation summary |

|---|---|---|

| pi0.5 | Natural-language prompt | OpenPI bridge maps the three camera streams and robot state to pi0.5; the DexHoldem-side output uses absolute joint-action targets. |

| pi0 | Natural-language prompt | OpenPI bridge with the same camera and prompt mapping as pi0.5; the default action convention is delta joint motion before conversion. |

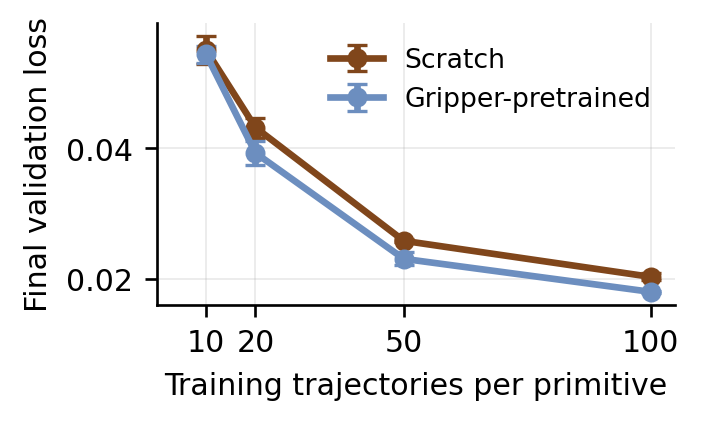

| RDT | Cached T5 language tokens | RDT-1B-style diffusion Transformer adapted from a gripper interface to the 30-D ShadowHand-UR joint space with SigLIP visual patch tokens. |

| RDT-small | Cached T5 language tokens | Reduced-capacity RDT variant using the same observation adapter and deployment path, initialized randomly and trained from scratch. |

| DP (DINO) | Instruction ID | High-capacity diffusion-policy baseline using frozen DINOv2 visual features and a Transformer denoiser for 30-D action chunks. |

| DP-Transformer | Instruction ID | Diffusion-policy Transformer baseline trained from scratch under the same instruction-ID-conditioned objective. |

| DP-UNet | Instruction ID | Lightweight diffusion-policy baseline with trainable ResNet visual encoders and a 1D UNet denoiser. |

| ACT | Instruction ID | CVAE Transformer policy that decodes deterministic 30-D action chunks from observation and instruction tokens at inference time. |

| BAKU | Instruction ID | Deterministic action-token Transformer adapted to the canonical batch format and 30-D robot command space. |
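The delta-to-absolute conversion mentioned for pi0 can be sketched as follows. The page does not say which delta convention the bridge uses. This sketch assumes the state-relative form, in which every step of the chunk is an offset from the joint state at the start of the chunk. To our understanding, this is the convention OpenPI's delta-action transforms use. The function name and the optional `mask` (for channels that are already absolute) are hypothetical.

```python
from typing import List, Optional, Sequence


def delta_to_absolute(
    deltas: Sequence[Sequence[float]],
    state: Sequence[float],
    mask: Optional[Sequence[bool]] = None,
) -> List[List[float]]:
    """Convert a chunk of state-relative deltas into absolute joint targets.

    Assumption: each step's delta is measured from the joint state at the
    start of the chunk, not from the previous step. Channels whose mask entry
    is False are treated as already absolute and passed through unchanged.
    """
    if mask is None:
        mask = [True] * len(state)
    if len(mask) != len(state):
        raise ValueError("mask and state must have the same length")
    out = []
    for step in deltas:
        if len(step) != len(state):
            raise ValueError("delta and state dimensions differ")
        out.append([d + s if m else d for d, s, m in zip(step, state, mask)])
    return out
```

If the bridge instead emits step-to-step (cumulative) deltas, the constant `state` offset would be replaced by a running sum over the chunk.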

Training and Runtime

| Stage | Implementation | Purpose |
|---|---|---|
| Organize | Raw primitive folders are converted to per-episode .npy arrays with 5 held-out validation trajectories per primitive. | Build the fixed 100/5 train-validation split used by every benchmarked policy. |
| Load | The loader exposes RGB/depth observations, optional precomputed RGB features, normalized proprioception, instruction ID, and action target. | Keep task-trained policies and pretrained adapters on the same data and state representation. |
| Normalize | Numeric proprioception and action channels are normalized to [-1, 1] with training-set statistics saved in the checkpoint. | Reuse the same statistics at deployment to normalize inputs and to unnormalize predicted actions into executable joint targets. |
| Serve | deploy_policy.py and OpenPI deployment scripts expose a ZeroMQ inference endpoint on port 13579. | Keep GPU inference, checkpoint loading, and model-specific preprocessing off the robot-control process. |
| Execute | robot_client.py packages live cameras, robot joints, and the selected primitive, then executes returned action chunks. | Run physical rollouts under the fixed primitive schedule and shared robot command format. |
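The Normalize stage can be sketched as a per-channel affine map. The page says only that channels are scaled to [-1, 1] with training-set statistics. Plain per-channel min/max bounds are an assumption (a quantile-based variant would change only `fit`). The class name and the JSON serialization are hypothetical stand-ins for whatever the checkpoint actually stores.

```python
import json
from dataclasses import asdict, dataclass
from typing import List, Sequence


@dataclass
class MinMaxStats:
    """Per-channel training-set bounds (assumption: plain min/max, no quantiles)."""

    low: List[float]
    high: List[float]

    @classmethod
    def fit(cls, rows: Sequence[Sequence[float]]) -> "MinMaxStats":
        columns = list(zip(*rows))  # one tuple per channel
        return cls([min(c) for c in columns], [max(c) for c in columns])

    def normalize(self, x: Sequence[float]) -> List[float]:
        # Map [low, high] -> [-1, 1]; constant channels map to 0.
        return [
            0.0 if hi == lo else 2.0 * (v - lo) / (hi - lo) - 1.0
            for v, lo, hi in zip(x, self.low, self.high)
        ]

    def unnormalize(self, y: Sequence[float]) -> List[float]:
        # Inverse map [-1, 1] -> [low, high], e.g. for executable joint targets.
        return [lo + (v + 1.0) * 0.5 * (hi - lo) for v, lo, hi in zip(y, self.low, self.high)]

    def to_json(self) -> str:
        return json.dumps(asdict(self))  # stored alongside the checkpoint

    @classmethod
    def from_json(cls, text: str) -> "MinMaxStats":
        return cls(**json.loads(text))
```

In this sketch, proprioception and actions would each get their own `MinMaxStats`. At deployment, the server would normalize the incoming joint state with the first and unnormalize the predicted chunk with the second, both loaded from the checkpoint rather than refit.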

Physical Scoring

Each trial is judged on two questions: whether the requested primitive is completed, and whether the tabletop remains usable afterward. Both matter because a primitive can succeed locally while moving non-target cards or chips enough to block later poker actions.

Scene-preserving success (SP): the requested primitive is completed and the tabletop remains usable.

Disruptive completion (DC): the local goal is achieved, but the scene is disturbed enough to prevent normal continuation.

Task failure (TF): the primitive is not completed, but the scene remains stable enough for retry.

Disruptive failure (DF): the primitive fails and the environment must be reset before continuing.
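The four outcomes form a two-by-two grid over the two judgements above, sketched below. The names `Outcome` and `label_trial` are hypothetical. In the physical benchmark, the two booleans correspond to judgements made for each real rollout.

```python
from enum import Enum


class Outcome(Enum):
    SP = "scene-preserving success"
    DC = "disruptive completion"
    TF = "task failure"
    DF = "disruptive failure"


def label_trial(completed: bool, scene_usable: bool) -> Outcome:
    """Map the two judgements of a trial onto the four outcome labels.

    completed: the requested primitive reached its local goal.
    scene_usable: play can continue, or the primitive can be retried, without a reset.
    """
    if completed:
        return Outcome.SP if scene_usable else Outcome.DC
    return Outcome.TF if scene_usable else Outcome.DF
```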

| Policy | Params | SP | DC | TF | DF | N | SPSR | TCR |
|---|---|---|---|---|---|---|---|---|
| pi0.5 | 2.94B | 38 | 11 | 31 | 0 | 80 | 47.5% | 61.2% |
| pi0 | 2.82B | 38 | 8 | 33 | 1 | 80 | 47.5% | 57.5% |
| RDT | 1.23B | 24 | 13 | 40 | 3 | 80 | 30.0% | 46.2% |
| DP (DINO) | 128M | 21 | 8 | 48 | 3 | 80 | 26.2% | 36.2% |
| DP-Transformer | 128M | 11 | 5 | 46 | 18 | 80 | 13.8% | 20.0% |
| RDT-small | 165M | 11 | 3 | 59 | 7 | 80 | 13.8% | 17.5% |
| ACT | 72.8M | 8 | 4 | 67 | 1 | 80 | 10.0% | 15.0% |
| BAKU | 39.4M | 5 | 5 | 67 | 3 | 80 | 6.2% | 12.5% |
| DP-UNet | 74.4M | 1 | 0 | 79 | 0 | 80 | 1.2% | 1.2% |
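As a consistency check, the SPSR and TCR columns can be recomputed from the outcome counts. The sketch below copies the counts and reported rates from the table. It only re-derives existing numbers and adds no results. The `rates` helper name is our own.

```python
from typing import Tuple

# Outcome counts (SP, DC, TF, DF) and reported rates copied from the table above.
RESULTS = {
    "pi0.5": ((38, 11, 31, 0), "47.5%", "61.2%"),
    "pi0": ((38, 8, 33, 1), "47.5%", "57.5%"),
    "RDT": ((24, 13, 40, 3), "30.0%", "46.2%"),
    "DP (DINO)": ((21, 8, 48, 3), "26.2%", "36.2%"),
    "DP-Transformer": ((11, 5, 46, 18), "13.8%", "20.0%"),
    "RDT-small": ((11, 3, 59, 7), "13.8%", "17.5%"),
    "ACT": ((8, 4, 67, 1), "10.0%", "15.0%"),
    "BAKU": ((5, 5, 67, 3), "6.2%", "12.5%"),
    "DP-UNet": ((1, 0, 79, 0), "1.2%", "1.2%"),
}


def rates(sp: int, dc: int, tf: int, df: int) -> Tuple[int, float, float]:
    """Return (N, SPSR, TCR), with both rates as percentages."""
    n = sp + dc + tf + df
    return n, 100 * sp / n, 100 * (sp + dc) / n


for name, (counts, spsr_reported, tcr_reported) in RESULTS.items():
    n, spsr, tcr = rates(*counts)
    # Python's '.1f' rounds exact halves to even (61.25 -> 61.2), matching the table.
    assert n == 80, name
    assert f"{spsr:.1f}%" == spsr_reported, name
    assert f"{tcr:.1f}%" == tcr_reported, name
    print(f"{name:15s} N={n} SPSR={spsr:.1f}% TCR={tcr:.1f}%")
```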